Why This Matters Now

- Class 3: irreversibility, physical actuation

- 0 ms: rollback window after actuation

- Gemma 4: runs on-device, offline, with function calling

- Open: HDP-P spec, CC BY 4.0, IETF draft to follow

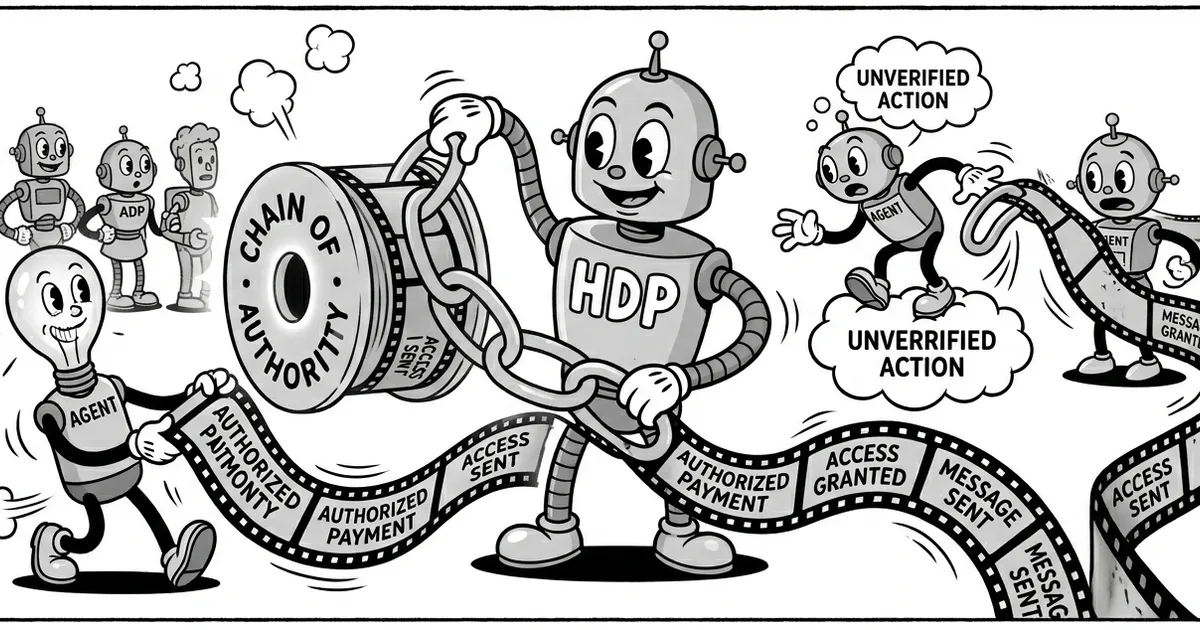

HDP-P middleware intercepting a malicious Class 3 actuator command in a Gemma 4 pipeline. Also available as an interactive demo on Hugging Face.

Gemma 4 shipped in April 2026. The 4B Effective model runs on a Jetson Nano. It does structured JSON function calling. That is not a research demo. That is a product reality available today, and it means the gap between “AI agent that can act” and “AI agent that can actuate a physical system” has closed.

The security model for that gap does not yet exist at any scale. When an AI model drives a software tool, a bad output usually means data loss, account compromise, or a fraudulent transaction. Recoverable, with work. When that same model drives a robot arm, a dispensing system, or an industrial relay, the recovery window is seconds. Sometimes zero.

HDP-P (Human Delegation Provenance, Physical) is an open protocol built for exactly this scenario. It puts a cryptographic authorization layer between the model's function calls and the physical actuator interface. The live demo on Hugging Face shows it working in real time against a Gemma 4 pipeline.

The New Attack Surface Nobody Is Talking About

The LLM security conversation has been dominated by prompt injection, data exfiltration, and jailbreaks. All real. All solvable with output filtering, sandboxing, and careful tool scoping. The physical AI agent threat is different in kind, not just degree.

Consider the standard prompt injection framing: an attacker embeds malicious instructions in content the model reads, the model acts on those instructions, and data gets exfiltrated or an account gets compromised. OWASP LLM01 [1]. Documented, mitigated, monitored.

Now place that same model inside a delivery robot. Or a dispensing machine in a hospital. Or a quality-control arm on a manufacturing line. The injected instruction does not exfiltrate data. It calls dispense_fluid() or command_vehicle(). The rollback window is not the time it takes your SOC to respond. It is the time it takes the actuator to complete its movement.

- Irreversibility is physical, not logical. A deleted file has a backup. A dispensed chemical, an applied force, or a moved mechanism does not.

- The exploit is the function call itself. No vulnerability required. If the model calls the tool and the tool is wired to an actuator, the attack succeeds the moment the call is made.

- Offline deployments remove the safety net. Edge AI on Jetson Nano or Raspberry Pi often runs without cloud connectivity. No real-time policy enforcement from a remote service.

- Fleet scale multiplies impact. A single compromised delegation token that propagates to a robot cluster is not one incident. It is simultaneous incidents across every device in the fleet.

How HDP-P Works

HDP-P derives from the IETF internet-draft draft-helixar-hdp-agentic-delegation-00, the base HDP protocol for agentic AI delegation. The physical extension adds four things the base protocol does not address.

HDP-P: Four Additions for Physical AI

Irreversibility Classification (Class 0 to 3)

Every tool call is assigned an irreversibility class. Class 0 is read-only. Class 3 is physical actuation. Delegation tokens carry a maximum class ceiling. If the requested action exceeds the ceiling, the middleware blocks it regardless of the model's confidence.
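The ceiling check described above can be sketched in a few lines of Python. This is a minimal illustration, not the reference implementation: the `TOOL_CLASSES` mapping, the `read_sensor` tool name, and the `within_ceiling` helper are hypothetical, and only the two classes the article defines (0 and 3) are named.

```python
from enum import IntEnum


class IrreversibilityClass(IntEnum):
    READ_ONLY = 0           # per the article: Class 0 is read-only
    PHYSICAL_ACTUATION = 3  # per the article: Class 3 is physical actuation
    # Intermediate classes are not described in this article, so they are omitted.


# Hypothetical mapping from tool name to irreversibility class.
TOOL_CLASSES = {
    "read_sensor": IrreversibilityClass.READ_ONLY,
    "dispense_fluid": IrreversibilityClass.PHYSICAL_ACTUATION,
}


def within_ceiling(tool_name: str, max_class: int) -> bool:
    """Return True only if the tool is known and its class does not exceed the ceiling."""
    tool_class = TOOL_CLASSES.get(tool_name)
    if tool_class is None:
        return False  # assumption: unclassified tools are blocked by default
    return tool_class <= max_class
```

Note that the model's output never enters the comparison: the decision depends only on the tool's assigned class and the ceiling carried by the token.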

Embodiment Context

Tokens are bound to a specific hardware ID. A token issued for one device cannot be replayed against another device in the fleet. Fleet-lateral movement requires a fresh authorization.
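A hardware binding check could look roughly like the following. The identifier format and the `hardware_matches` name are assumptions for illustration; how a device obtains a trustworthy hardware ID is outside this sketch.

```python
import hmac


def hardware_matches(token_hardware_id: str, device_hardware_id: str) -> bool:
    """Compare the ID bound into the token with this device's own ID.

    hmac.compare_digest is used so the comparison takes constant time.
    """
    return hmac.compare_digest(
        token_hardware_id.encode("utf-8"),
        device_hardware_id.encode("utf-8"),
    )
```

A token minted for `"jetson-001"` would fail this check on `"jetson-002"`, which is the replay case the binding is meant to stop.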

Policy Attestation

The deployed model's weight hash is included in the token. If the model on-device does not match the authorized model, the token is invalid. This prevents weight-substitution attacks against offline deployments.
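As a rough sketch of the attestation step, the middleware could hash the weight file on the device and compare it with the value carried in the token. The article does not name the hash function or the file layout, so SHA-256 over a single weight file is an assumption here, as are the function names.

```python
import hashlib
import hmac


def weight_hash(path: str, chunk_size: int = 1 << 20) -> str:
    """Hash a weight file in chunks so large models need not fit in memory."""
    digest = hashlib.sha256()  # assumption: the hash algorithm is not given in the article
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def model_is_attested(path: str, token_weight_hash: str) -> bool:
    """Return True only if the on-device weights match the hash in the token."""
    return hmac.compare_digest(weight_hash(path), token_weight_hash)
```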

Pre-Execution Audit Records

Every middleware decision, pass or block, is written to an audit log before any actuator command is issued. If the device loses power mid-sequence, the audit record exists for every attempted command.
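The ordering guarantee (log first, actuate second) can be illustrated as below. The JSON-lines record format, the field names, and the `audited_dispatch` helper are hypothetical; the point of the sketch is that the record is flushed and synced to disk before the actuator callback runs.

```python
import json
import os
import time


def audited_dispatch(log_path, tool_name, allowed, actuate):
    """Write the decision to the audit log, then call actuate() only if allowed."""
    record = {
        "ts": time.time(),
        "tool": tool_name,
        "decision": "pass" if allowed else "block",
    }
    with open(log_path, "a", encoding="utf-8") as log:
        log.write(json.dumps(record) + "\n")
        log.flush()
        os.fsync(log.fileno())  # ask the OS to persist the record before actuation
    if allowed:
        return actuate()
    return None
```

If power is lost inside `actuate()`, the log already contains the attempted command.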

The Gemma 4 Demo

The HDP-P physical demo on Hugging Face runs the middleware against a Gemma 4 function-calling pipeline. You can send authorized commands, watch them pass, inject out-of-scope or Class 3 commands, and watch them get blocked at the delegation layer, all before any simulated actuator receives input.

The flow is straightforward. A human principal generates an Ed25519 keypair and issues a delegation token specifying permitted tools, maximum irreversibility class, token lifetime, and embodiment binding. The middleware takes the public key only. When Gemma 4 generates a function call, the middleware verifies the token signature, checks scope membership, confirms the irreversibility class, and validates the hardware binding. Pass or block, the decision is logged before execution.
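The verification sequence above can be sketched end to end. Everything here is illustrative: the `Token` fields, `canonical_bytes`, and `authorize` are hypothetical names, and the explicit expiry check is an assumption based on the token lifetime field. To keep the example standard-library only, the signature check is passed in as a callable and demonstrated with an HMAC-SHA256 stand-in. That stand-in is symmetric, so it does not give the public-key-only property of Ed25519 that the protocol relies on; a real deployment would plug in an Ed25519 verifier instead.

```python
import hashlib
import hmac
import json
import time
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class Token:
    tools: tuple         # permitted tool names
    max_class: int       # maximum irreversibility class
    expires_at: float    # Unix timestamp; encodes the token lifetime
    hardware_id: str     # embodiment binding


def canonical_bytes(token: Token) -> bytes:
    """Serialize the token deterministically so it can be signed and verified."""
    return json.dumps(asdict(token), sort_keys=True).encode("utf-8")


def authorize(token, signature, call_tool, call_class, device_id, verify, now=None):
    """Run the checks in order and return 'pass' or a 'block: ...' reason."""
    now = time.time() if now is None else now
    if not verify(canonical_bytes(token), signature):
        return "block: bad signature"
    if now >= token.expires_at:
        return "block: token expired"
    if call_tool not in token.tools:
        return "block: tool out of scope"
    if call_class > token.max_class:
        return "block: class exceeds ceiling"
    if device_id != token.hardware_id:
        return "block: hardware mismatch"
    return "pass"


# Stand-in signer/verifier for the demo only (NOT Ed25519, and symmetric).
DEMO_KEY = b"demo-only-key"


def demo_sign(message: bytes) -> bytes:
    return hmac.new(DEMO_KEY, message, hashlib.sha256).digest()


def demo_verify(message: bytes, signature: bytes) -> bool:
    return hmac.compare_digest(demo_sign(message), signature)
```

In use, a caller would log the returned decision before dispatching anything, and treat any value other than `"pass"` as a block.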

“A token that expires in five minutes and is bound to a specific Jetson Nano means the attack window for a compromised session is five minutes and one device. That is not zero, but it is a very different problem from an unbounded delegation to an entire fleet.”

The video walkthrough on the Helixar Labs page shows the middleware blocking a malicious Class 3 injection in a live Gemma 4 pipeline. The physical actuator receives no command. The audit record shows exactly what was attempted, by which token (expired, in this case), and when.

Why Gemma 4 Is the Forcing Function

Every model before Gemma 4 that could do reliable structured function calling was too large for most edge deployments, or required cloud connectivity, or was not open weight. The Gemma 4 E2B variant changes this. It runs on modest hardware, offline, with function calling that is accurate enough to wire directly to real tool interfaces.

The robotics and industrial automation industries have been waiting for exactly this model. Not a model that “almost” works for function calling. A model where the output is structured enough that you can build a production tool-dispatch layer on top of it. Gemma 4 E2B is that model. Integrators are deploying it now.

For most edge AI deployments today, the authorization model between the LLM and the physical interface is whatever the integrator wired up at prototype time. In most cases, that is a direct function call with no token, no scope check, and no audit record. The model output is the authorization. That worked when the model was behind a cloud API. It does not work when the model sits inside a device that has a motor attached to it.

The Specification

The HDP-P specification is published on Zenodo (DOI: 10.5281/ZENODO.19332440) under CC BY 4.0. The base HDP protocol is published as an IETF internet-draft; HDP-P itself is not yet an IETF draft. Reference Python implementations are on GitHub. Implementations, forks, and commentary on the base HDP draft and the physical extension are welcome.

Edge AI deployments are happening. Physical AI agents are being wired up today, in logistics, in manufacturing, in healthcare, and in consumer robotics. The window to get the authorization model right is before the fleet ships, not after the first incident.

References

- OWASP LLM Security Top 10, LLM01: Prompt Injection. owasp.org/www-project-top-10-for-large-language-model-applications

- Google Gemma 4 Model Family. blog.google/technology/developers/google-gemma-4

- IETF Internet-Draft: draft-helixar-hdp-agentic-delegation-00. datatracker.ietf.org/doc/draft-helixar-hdp-agentic-delegation

- HDP-P Physical AI Specification. Zenodo. doi.org/10.5281/ZENODO.19332440

- HDP-P Physical Defense Demo (Hugging Face). huggingface.co/spaces/helixar-ai/hdp-physical-demo

- NVIDIA Jetson Nano Developer Kit. developer.nvidia.com/embedded/learn/get-started-jetson-nano-devkit